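Monitoring 2.0 Bridge Edition Installation

I. Deployment Inventory

1.1 Deployment server

Single node: minimum configuration 8C32G (8 CPU cores, 32 GB RAM)

OS: CentOS 7.5

IP address: 192.168.56.61

1.2 Middleware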

| No. | Software | Version | Deployment method |
|---|---|---|---|
| 1 | Docker | 20.10.23 | Archive install |
| 2 | kafka | 3.2.1 | Archive install |
| 3 | redis | 5.0.8 | Container |
| 4 | minio | 20230527 | Archive install |
| 5 | flink | 1.15.4 | Container |
| 6 | mysql | 8.0.26 | Container |
| 7 | clickhouse | 22.2.3.5 | yum package |
| 8 | emqx | 4.4.17 | yum package |
| 9 | nacos | 2.2.2 | Archive install |
| 10 | docker-compose | | Archive install |

1.3 Business services

| No. | Software | Version | Deployment method |
|---|---|---|---|
| 1 | hi-ims-standard | V2.2.3.4 | Container |
| 2 | hi-ims-job-server | V2.2.3.4 | Container |
| 3 | hi-ims-gateway | V2.2.3.4 | Container |
| 4 | hi-ims-web | V2.2.3.4 | Container |
| 5 | hi-ims-init | V2.2.3.4 | Container |

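II. Environment Deployment

2.1 Deployment plan

| No. | Application | Planned port(s) |
|---|---|---|
| 1 | kafka | 9092 |
| 2 | redis | 7001-7006 |
| 3 | MySQL | 3306 |
| 4 | clickhouse | 8123 |
| 5 | emqx | 1883 |
| 6 | nacos | 8848 |
| 7 | minio | 9906 (API), 35555 (console), as set in the start script in 2.2.5 |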
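
Before installing anything, it can help to confirm that none of the planned ports is already taken on the server. The sketch below is only an illustration, not part of the original procedure: the function names `port_in_use` and `check_ports` are made up for this example, and it relies on bash's `/dev/tcp` pseudo-device, so it should be run with bash.

```shell
#!/usr/bin/env bash
# Sketch: report whether each planned port already has a listener.
# port_in_use and check_ports are hypothetical helper names.

# port_in_use HOST PORT: exit status 0 if something accepts TCP connections there.
port_in_use() {
    local host="$1" port="$2"
    # The subshell opens a TCP connection and closes it again when it exits;
    # connection errors are silenced.
    (exec 3<>"/dev/tcp/${host}/${port}") 2>/dev/null
}

# check_ports HOST PORT...: print "<port> in-use" or "<port> free" for each port.
check_ports() {
    local host="$1"; shift
    local port
    for port in "$@"; do
        if port_in_use "$host" "$port"; then
            echo "${port} in-use"
        else
            echo "${port} free"
        fi
    done
}

# Example: check_ports 127.0.0.1 9092 7001 3306 8123 1883 8848
```

On a fresh server every port is expected to be reported as free; after the middleware is deployed, the same call gives a quick view of which services are listening.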

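2.2 Deploying the middleware

2.2.1 System tuning

Disable SELinux

Check the SELinux status

# getenforce

Enforcing

Disable SELinux temporarily (until the next reboot)

# setenforce 0

# getenforce

Permissive

Disable SELinux permanently so that it stays off after a reboot

# vim /etc/selinux/config

Change SELINUX=enforcing to SELINUX=disabled; the change takes effect after the host is rebooted.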
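
If the change should be scripted rather than made in an editor, a `sed` one-liner can do the same edit. This is a minimal sketch under the assumption that the file contains a line beginning with `SELINUX=`; the function name `set_selinux_disabled` is made up for illustration.

```shell
#!/usr/bin/env bash
# Sketch: set SELINUX=disabled in an SELinux config file without opening an editor.
# set_selinux_disabled is a hypothetical helper name.

# set_selinux_disabled [FILE]: FILE defaults to /etc/selinux/config.
set_selinux_disabled() {
    local cfg="${1:-/etc/selinux/config}"
    # Only the line that starts with "SELINUX=" is rewritten;
    # "SELINUXTYPE=..." does not match the pattern and is left alone.
    sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$cfg"
}

# Example (as root): set_selinux_disabled && grep '^SELINUX=' /etc/selinux/config
```

As with the manual edit, a reboot is still needed for the setting to take effect.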

Disable the firewall

Stop the firewall temporarily

# systemctl stop firewalld

Disable the firewall at boot (permanent)

# systemctl disable firewalld

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

Removed symlink /etc/systemd/system/basic.target.wants/firewalld.service.

Disable swap

Turn swap off temporarily

# swapoff -a

Turn swap off permanently

# vi /etc/fstab

Comment out the line that configures swap by prefixing it with #

#/dev/mapper/centos-swap swap swap defaults 0 0

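Disable transparent huge pages

Add the following line to /etc/rc.local so that it is applied at every boot (on CentOS 7 the file /etc/rc.d/rc.local must be executable for this to run)

vi /etc/rc.local

echo never > /sys/kernel/mm/transparent_hugepage/enabled

Then set the kernel parameters and load them

echo 'vm.overcommit_memory = 1' >> /etc/sysctl.conf

echo 'net.core.somaxconn = 1024' >> /etc/sysctl.conf

sysctl -p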
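
Because `echo ... >> /etc/sysctl.conf` appends a new line every time it is run, repeating this step leaves duplicate entries in the file. One way to make it repeatable is to append a line only when it is not already present. The helper name `append_once` is an assumption made for this sketch.

```shell
#!/usr/bin/env bash
# Sketch: append a line to a file only if an identical line is not already there.
# append_once is a hypothetical helper name.

# append_once FILE LINE
append_once() {
    local file="$1" line="$2"
    # grep: -F fixed string, -x whole-line match, -q quiet.
    # A missing file counts as "line not present", so the line is then written.
    grep -Fxq -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Example (as root):
#   append_once /etc/sysctl.conf 'vm.overcommit_memory = 1'
#   append_once /etc/sysctl.conf 'net.core.somaxconn = 1024'
#   sysctl -p
```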

2.2.2 Deploying Docker

## Upload the package: docker-20.10.23.tgz

tar xzvf docker-20.10.23.tgz -C /tmp

cp /tmp/docker/* /usr/local/bin &>/dev/null && rm -rf /tmp/docker

## Create the Docker systemd service

cat << EOF > /etc/systemd/system/docker.service

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

ExecStart=/usr/local/bin/dockerd

ExecReload=/bin/kill -s HUP \$MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

EOF

cat << EOF > /etc/systemd/system/docker.socket

[Unit]

Description=Docker Socket for the API

PartOf=docker.service

[Socket]

ListenStream=/run/docker.sock

SocketMode=0660

SocketUser=root

SocketGroup=docker

[Install]

WantedBy=sockets.target

EOF

## Docker configuration file

mkdir -p /etc/docker

cat << EOF > /etc/docker/daemon.json
{
  "data-root": "/mnt/docker",
  "log-driver": "json-file",
  "log-opts": {"max-size": "100m", "max-file": "3"}
}
EOF

groupadd docker

systemctl daemon-reload && systemctl enable docker && systemctl start docker

systemctl status docker

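2.2.3 Deploying Kafka

## Check whether Java is installed

# java -version

-bash: java: command not found

If this message appears, Java needs to be installed.

## Install Java

tar -zxf jdk-11.0.19_linux-x64_bin.tar.gz -C /opt/

PATH_NEW=`cat ~/.bash_profile |grep PATH=`

sed -i "s@${PATH_NEW}@${PATH_NEW}:/opt/jdk-11.0.19/bin@g" ~/.bash_profile

echo 'export JAVA_HOME=/opt/jdk-11.0.19' >> ~/.bash_profile

source ~/.bash_profile

## Install Kafka

mkdir /ZHD/apps/kafka -p

mkdir /ZHD/data/kafka -p

## --strip-components=1 drops the archive's top-level directory so that bin/ and config/ land directly under /ZHD/apps/kafka, matching the paths used below

tar -zxf kafka_2.13-3.2.1.tar.gz -C /ZHD/apps/kafka --strip-components=1

## Create the systemd service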

cat > /etc/systemd/system/kafka.service << EOT

[Unit]

Description=Apache Kafka server (broker)

After=network.target zookeeper.service

[Service]

Type=simple

Environment=JAVA_HOME=/opt/jdk-11.0.19/

User=root

Group=root

ExecStart=/ZHD/apps/kafka/bin/kafka-server-start.sh /ZHD/apps/kafka/config/kraft/server.properties

ExecStop=/ZHD/apps/kafka/bin/kafka-server-stop.sh

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOT

## Edit the configuration file and set the following values

vi /ZHD/apps/kafka/config/kraft/server.properties

node.id=1

controller.quorum.voters=1@192.168.56.61:9093

listeners=PLAINTEXT://192.168.56.61:9092,CONTROLLER://192.168.56.61:9093

advertised.listeners=PLAINTEXT://192.168.56.61:9092

log.dirs=/ZHD/data/kafka

## Format the data directory

KAFKA_CLUSTER_ID=`/ZHD/apps/kafka/bin/kafka-storage.sh random-uuid`

/ZHD/apps/kafka/bin/kafka-storage.sh format -t ${KAFKA_CLUSTER_ID} -c /ZHD/apps/kafka/config/kraft/server.properties

## Start Kafka

systemctl enable kafka

systemctl start kafka

2.2.4 Deploying Redis

Two deployment methods are provided; choose one of them.

## Container deployment

mkdir -p /ZHD/data/redis/7001

cat > /ZHD/data/redis/7001/redis.conf << EOT
appendonly yes
requirepass zhdgps@123
logfile '/data/redis_7001.log'
save 900 1
save 300 10
save 60 10000
dbfilename dump.rdb
dir /data/
EOT

docker run -it -d -p 7001:6379 \
-v /ZHD/data/redis/7001:/data \
-v /ZHD/data/redis/7001:/usr/local/etc/redis \
-v /etc/localtime:/etc/localtime \
--name redis_7001 redis:5.0.8 \
redis-server /usr/local/etc/redis/redis.conf

## Source package deployment

## Redis is built from source, so the development and build tools must be installed first

yum group install -y 'Development Tools'

tar -zxf redis-5.0.8.tar.gz -C /tmp/

cd /tmp/redis-5.0.8

make && make install

## Create the Redis data directory and configuration file

mkdir /ZHD/data/redis/7001 -p

cp redis.conf /ZHD/data/redis/7001/redis.conf

## Edit the configuration file and set the following values

vi /ZHD/data/redis/7001/redis.conf

bind 0.0.0.0

port 7001

daemonize yes

supervised systemd

pidfile /var/run/redis_7001.pid

logfile "/ZHD/data/redis/7001/redis_7001.log"

syslog-enabled yes

syslog-ident redis

syslog-facility local0

appendonly yes

requirepass zhdgps@123

## Start Redis

cd /ZHD/data/redis/7001/

redis-server redis.conf

2.2.5 Deploying MinIO

## Install the software

mkdir /ZHD/apps/minio -p

mkdir /ZHD/data/minio -p

chmod +x minio-20230527

cp -rp minio-20230527 /ZHD/apps/minio/minio

## Create the start script

cat > /ZHD/apps/minio/minio_start.sh << 'EOT'
#!/bin/bash
nohup /ZHD/apps/minio/minio server /ZHD/data/minio --console-address ":35555" --address ":9906" > /ZHD/apps/minio/minio.log 2>&1 &
EOT

## Create the stop script (the delimiter is quoted so that the backticks and $2 are written to the file literally instead of being expanded while the file is created)

cat > /ZHD/apps/minio/minio_stop.sh << 'EOT'
#!/bin/bash
kill -9 `ps -ef |grep minio | grep -v grep | awk '{print $2}'`
EOT

chmod +x /ZHD/apps/minio/minio_start.sh

chmod +x /ZHD/apps/minio/minio_stop.sh

## Start MinIO

/ZHD/apps/minio/minio_start.sh
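
The stop script contains backticks and `$2` inside a here-document, and how the delimiter is written decides what ends up in the file. With a bare delimiter (`<< EOT`) the shell expands variables and command substitutions while writing; with a quoted delimiter (`<< 'EOT'`) the body is written exactly as typed. The two functions below are hypothetical names used only to show the difference.

```shell
#!/usr/bin/env bash
# Sketch: quoted vs. unquoted here-document delimiters.
# write_literal and write_expanded are hypothetical names for this demonstration.

# Quoted delimiter: the body is emitted exactly as typed, "$2" stays "$2".
write_literal() {
    cat << 'EOT'
echo "pid column: $2"
EOT
}

# Unquoted delimiter: "$2" is replaced by the function's second argument
# at the moment the here-document is written.
write_expanded() {
    cat << EOT
echo "pid column: $2"
EOT
}

# Example:
#   write_literal          prints: echo "pid column: $2"
#   write_expanded a b     prints: echo "pid column: b"
```

For generated scripts that must evaluate `$2` or backticks when they run, the quoted form is the one that keeps them intact.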

2.2.6 Deploying EMQX

2.2.6.1 Container deployment

mkdir /ZHD/data/emqx -p

mkdir /ZHD/apps/emqx -p

mkdir /ZHD/data/emqx/logs -p

## Start a temporary container to obtain the configuration directory

docker run -d --name emqx -p 1883:1883 -p 8081:8081 -p 8083:8083 -p 8084:8084 -p 8883:8883 -p 18083:18083 emqx/emqx:4.4.19

Time=`date '+%Y%m%d'`

## The trailing /. copies the contents of etc/ directly into /ZHD/apps/emqx

docker cp emqx:/opt/emqx/etc/. /ZHD/apps/emqx

mv /ZHD/apps/emqx/acl.conf /ZHD/apps/emqx/acl.conf_bak_${Time}

mv /ZHD/apps/emqx/emqx.conf /ZHD/apps/emqx/emqx.conf_bak_${Time}

mv /ZHD/apps/emqx/plugins/emqx_auth_redis.conf /ZHD/apps/emqx/plugins/emqx_auth_redis.conf_bak_${Time}

cp -rp config/emqx_acl.conf /ZHD/apps/emqx/acl.conf

cp -rp config/emqx.conf /ZHD/apps/emqx/emqx.conf

cp -rp config/emqx_auth_redis.conf /ZHD/apps/emqx/plugins/emqx_auth_redis.conf

## Edit the copied files (the settings to change are listed in 2.2.6.2)

## Recreate the emqx container with the directories mounted

docker stop emqx

docker rm emqx

docker run -d --name emqx -p 1883:1883 \
-p 8081:8081 -p 8083:8083 -p 8084:8084 \
-p 8883:8883 -p 18083:18083 \
-v /ZHD/apps/emqx:/opt/emqx/etc \
-v /ZHD/data/emqx:/var/lib/emqx \
-v /ZHD/data/emqx/logs:/var/log/emqx \
emqx/emqx:4.4.19

2.2.6.2 本地部署

## 安装软件

yum install -y epel-release

yum install -y openssl11 openssl11-devel

yum install -y emqx-4.4.17-otp24.3.4.2-1-el7-amd64.rpm

## 复制配置文件

Datatime=`date ‘+%Y%m%d’`

mv /etc/emqx/acl.conf /etc/emqx/acl.conf_bak_${Datatime}

mv /etc/emqx/emqx.conf /etc/emqx/emqx.conf_bak_${Datatime}

mv /etc/emqx/plugins/emqx_auth_redis.conf /etc/emqx/plugins/emqx_auth_redis.conf_bak_${Datatime}

cp config/emqx_acl.conf /etc/emqx/acl.conf

cp config/emqx.conf /etc/emqx/emqx.conf

cp config/emqx_auth_redis.conf /etc/emqx/plugins/emqx_auth_redis.conf

## 编辑/etc/emqx/emqx.conf

node.name = pro@192.168.56.61

## 编辑/etc/emqx/plugins/emqx_auth_redis.conf

auth.redis.type = single

auth.redis.server = 192.168.56.61:7001

auth.redis.password =zhdgps@123

## 编辑/etc/emqx/plugins/emqx_web_hook.conf

web.hook.enable_pipelining = false

## 启动emqx服务

systemctl start emqx

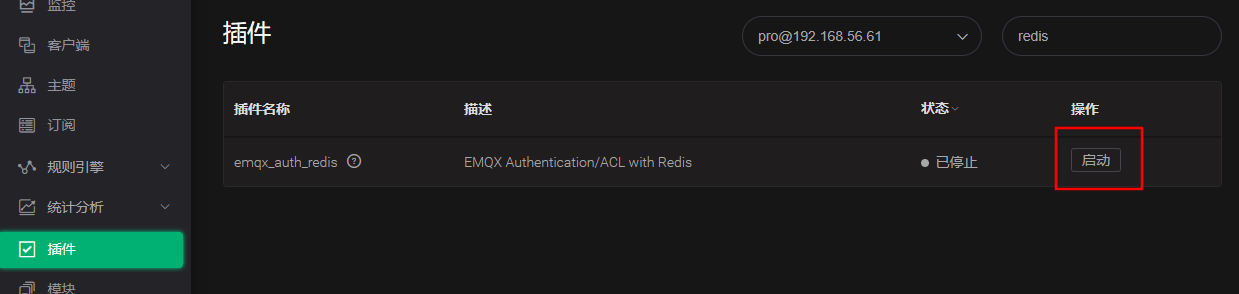

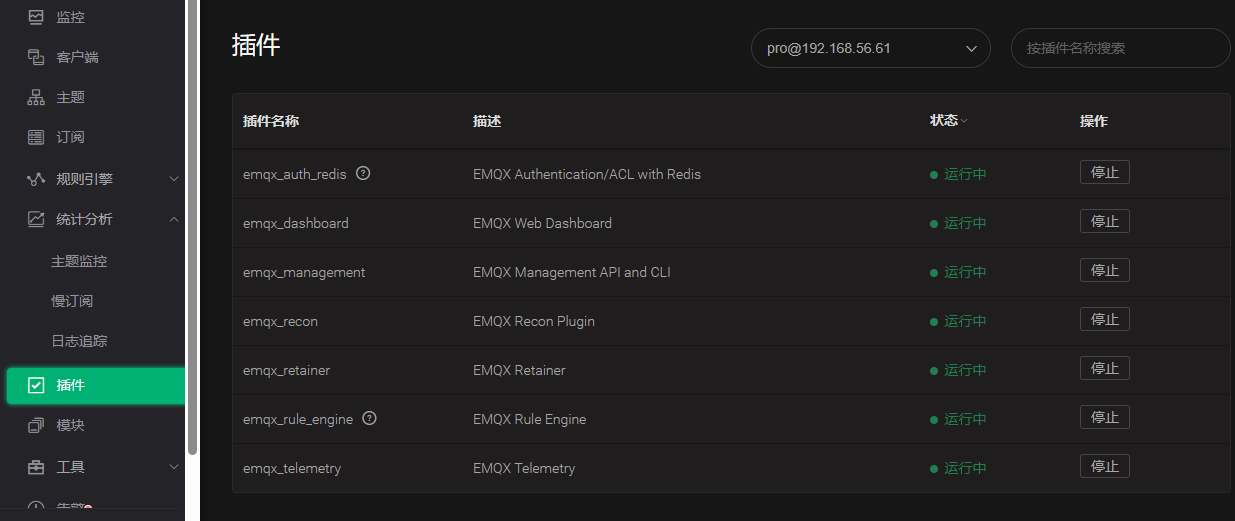

emqx开启连接redis模块

http://192.168.56.61:18083

账号:admin 密码:public

2.2.7 Deploying MySQL

Two methods are provided: container installation and local installation.

2.2.7.1 Container deployment

## Container installation

mkdir /ZHD/data/mysql/config -p

mkdir /ZHD/data/mysql/data -p

mkdir /ZHD/data/mysql/logs -p

## Create the configuration file

cat > /ZHD/data/mysql/config/my.cnf << EOT

[mysqld]

sql_mode='STRICT_TRANS_TABLES,NO_ZERO_IN_DATE,NO_ZERO_DATE,ERROR_FOR_DIVISION_BY_ZERO,NO_ENGINE_SUBSTITUTION'

max_connect_errors=1000

max_connections=1000

mysqlx_max_connections=1000

log-bin=binlog

server-id=3306

EOT

## Create the container

docker run -it -d -p 3306:3306 \
-v /ZHD/data/mysql/data:/var/lib/mysql \
-v /ZHD/data/mysql/config/my.cnf:/etc/my.cnf \
-e MYSQL_ROOT_PASSWORD=Zhdgps@123 \
--name mysql_3306 mysql:8.0.26

2.2.7.2 Local deployment

mkdir /ZHD/apps/ -p

mkdir /ZHD/data/mysql -p

tar -zxf mysql-8.0.26-el7-x86_64.tar.gz -C /ZHD/apps/

ln -s /ZHD/apps/mysql-8.0.26-el7-x86_64 /ZHD/apps/mysql

## Add MySQL to the PATH environment variable

OLDPATH=`cat /root/.bash_profile |grep PATH=`

NEWPATH="${OLDPATH}:/ZHD/apps/mysql/bin"

sed -i "s@${OLDPATH}@${NEWPATH}@g" /root/.bash_profile

source /root/.bash_profile

## Create the startup service

cp -rp /ZHD/apps/mysql/support-files/mysql.server /etc/init.d/mysql

sed -i 's@^basedir=@basedir=/ZHD/apps/mysql@g' /etc/init.d/mysql

sed -i 's@^datadir=@datadir=/ZHD/data/mysql@g' /etc/init.d/mysql

sed -i 's@conf=/etc/my.cnf@conf=/ZHD/apps/mysql/my.cnf@g' /etc/init.d/mysql

sed -i 's@Start daemon@Start daemon \nln -s /var/lib/mysql/mysql.sock /tmp/mysql.sock@g' /etc/init.d/mysql

## Create the configuration file

cat > /ZHD/apps/mysql/my.cnf << EOT

[mysqld]

sql_mode='STRICT_TRANS_TABLES,NO_ZERO_IN_DATE,NO_ZERO_DATE,ERROR_FOR_DIVISION_BY_ZERO,NO_ENGINE_SUBSTITUTION'

datadir=/ZHD/data/mysql

basedir=/ZHD/apps/mysql

socket=/var/lib/mysql/mysql.sock

max_connect_errors=1000

max_connections=1000

mysqlx_max_connections=1000

log-bin=binlog

server-id=3306

log-error=/var/log/mysqld.log

pid-file=/var/run/mysqld/mysqld.pid

EOT

## Create the user and group that run MySQL

groupadd mysql

useradd -g mysql -s /bin/false mysql

mkdir -p /var/lib/mysql

mkdir -p /var/run/mysqld

touch /var/log/mysqld.log

chown mysql:mysql /var/log/mysqld.log

chown mysql:mysql /ZHD/data/mysql

chown mysql:mysql /var/run/mysqld

chown mysql:mysql /var/lib/mysql

mysqld --defaults-file=/ZHD/apps/mysql/my.cnf --initialize --user=mysql

## Print the temporary root login password

cat /var/log/mysqld.log | grep password

## Move the default configuration file out of the way

mv /etc/my.cnf /etc/my.cnf_bak

## Enable start at boot

chkconfig --level 3 mysql on

systemctl start mysql

mysql -uroot -p

## Change the root password

alter user 'root'@'localhost' identified by 'Zhdgps@123';

update mysql.user set host='%' where user='root';

flush privileges;

2.2.8 Deploying ClickHouse

2.2.8.1 Container deployment

mkdir /ZHD/data/clickhouse/data -p

mkdir /ZHD/data/clickhouse/logs -p

mkdir /ZHD/data/clickhouse/config -p

## Start a temporary container to obtain the configuration directory, for later parameter tuning

docker run -d -p 8123:8123 -p 9000:9000 --name clickhouse-server --ulimit nofile=262144:262144 \
-v /ZHD/data/clickhouse/data:/var/lib/clickhouse/ \
-v /ZHD/data/clickhouse/logs:/var/log/clickhouse-server/ \
clickhouse/clickhouse-server:22.2.3.5

cd /ZHD/data/clickhouse/config

docker cp clickhouse-server:/etc/clickhouse-server/ ./

mv clickhouse-server/* .

rm -rf clickhouse-server

docker stop clickhouse-server && docker rm clickhouse-server

## Recreate the container with the configuration directory mounted

docker run -d -p 8123:8123 -p 9000:9000 --name clickhouse-server --ulimit nofile=262144:262144 \
-v /ZHD/data/clickhouse/data:/var/lib/clickhouse/ \
-v /ZHD/data/clickhouse/logs:/var/log/clickhouse-server/ \
-v /ZHD/data/clickhouse/config:/etc/clickhouse-server/ \
-e CLICKHOUSE_PASSWORD='Zhdgps@123' \
clickhouse/clickhouse-server:22.2.3.5

2.2.8.2 Local deployment

sudo yum install -y yum-utils

sudo yum-config-manager --add-repo https://packages.clickhouse.com/rpm/clickhouse.repo

sudo yum install -y clickhouse-server clickhouse-client

## Edit users.xml and set the password

vi /etc/clickhouse-server/users.xml

<password>Zhdgps@123</password>

## Edit config.xml and set the listen address

vi /etc/clickhouse-server/config.xml

<listen_host>0.0.0.0</listen_host>

sudo systemctl enable clickhouse-server

sudo systemctl start clickhouse-server

sudo systemctl status clickhouse-server

2.2.9 Deploying Nacos

2.2.9.1 Software deployment

## Install the software

mkdir /ZHD/apps/nacos -p

unzip nacos-server-2.2.2.zip

mv nacos/* /ZHD/apps/nacos/

## Edit the configuration file

cd /ZHD/apps/nacos/

cp -rp conf/application.properties conf/application.properties_bak

sed -i 's@# spring.datasource.platform=mysql@spring.datasource.platform=mysql@g' conf/application.properties

sed -i 's@# db.num=1@db.num=1@g' conf/application.properties

sed -i 's@# db.url.0@db.url.0@g' conf/application.properties

sed -i 's@# db.user.0=nacos@db.user.0=root@g' conf/application.properties

sed -i 's%# db.password.0=nacos%db.password.0=Zhdgps@123%g' conf/application.properties

## Switch Nacos to standalone mode

sed -i 's#export MODE="cluster"#export MODE="standalone"#g' bin/startup.sh

## Create the nacos database

echo 'create database nacos' | mysql -uroot -pZhdgps@123

## Run the Nacos schema script

mysql -uroot -pZhdgps@123 nacos < conf/mysql-schema.sql

## Start Nacos

bin/startup.sh

2.2.9.2 nacos配置文件修改

由于都是单机部署,所以IP地址都均为:192.168.56.61

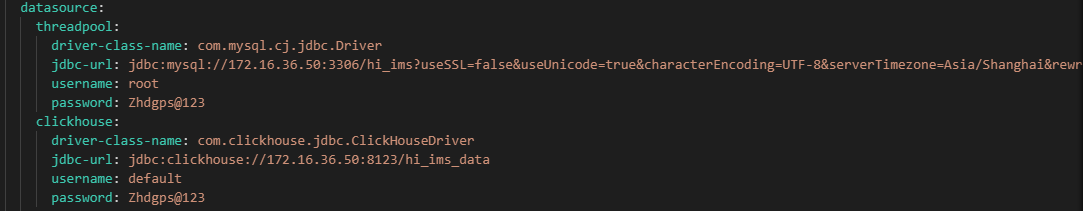

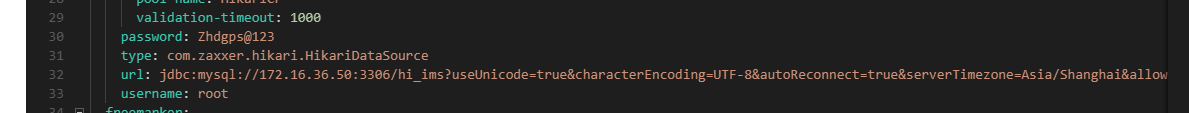

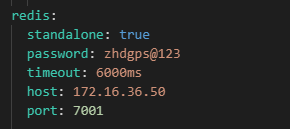

一、hi-ims-common.yaml

1、根据环境修改为生产的数据库地址、帐号、密码,如为192.168.56.61

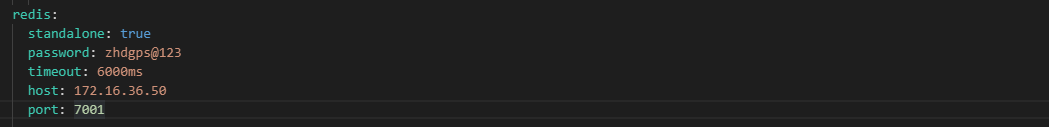

2、修改redis连接、密码,根据redis部署是单机或集群来填写参数

## Standalone Redis

redis:
  standalone: true
  password: zhdgps@123
  timeout: 6000ms
  host: 172.16.36.50
  port: 7001

## Redis cluster configuration

redis:
  password: zhdgps@123
  timeout: 6000ms
  cluster:
    max-redirects: 3 # maximum number of redirects within the cluster
    nodes:
      - 192.168.200.21:7001
      - 192.168.200.21:7002
      - 192.168.200.48:7003
      - 192.168.200.48:7004
      - 192.168.200.49:7005
      - 192.168.200.49:7006
  lettuce:
    pool:
      max-active: 1000
      max-wait: -1ms
      max-idle: 10
      min-idle: 5

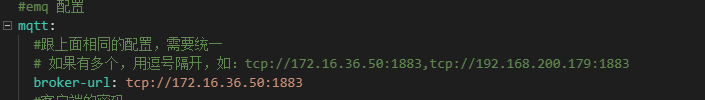

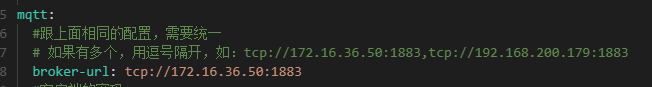

3、修改mqtt地址,tcp://192.168.56.61:1883

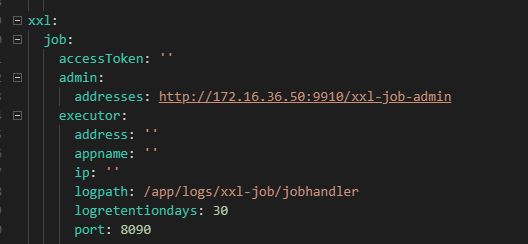

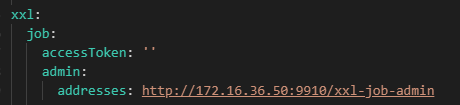

4、修改xxl-job-admin的连接地址为:http://192.168.56.61:9910/xxl-job-admin

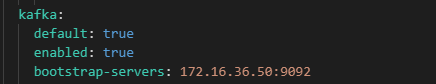

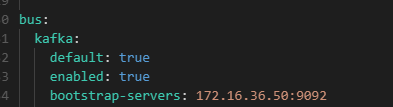

5、修改kafka地址,192.168.56.61:9092

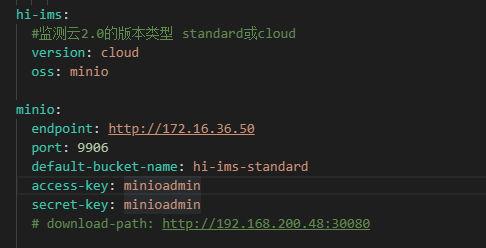

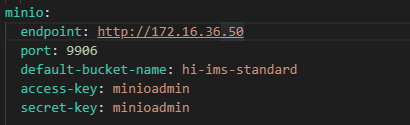

6、修改minio地址,192.168.56.61

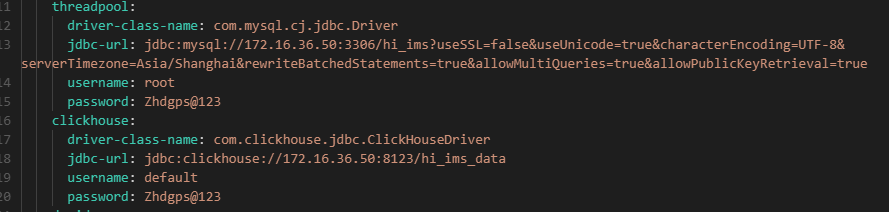

二、hi-ims-job-server.yaml

1、修改数据地址、帐号、密码

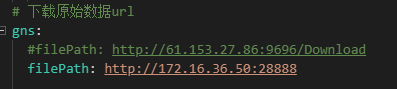

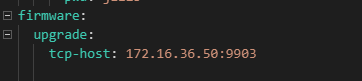

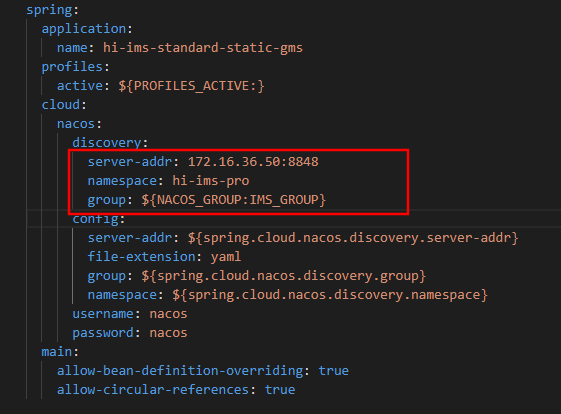

三、hi-ims-standard-static-gms.yaml

1、修改数据库地址、账号、密码

2、修改redis地址、密码,如果是集群,请参考hi-ims-common.yaml配置说明下的集群配置添加参数

3、修改minio地址

4、修改mqtt地址

5、修改 xxl-job地址

6、修改kafka地址

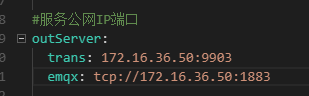

7、修改对外提供服务地址,如有公网填写为公网与映射端口

8、修改观测数据下载地址,如有公网填写为公网与映射端口

9、修改地址

四、hi-ims-gateway.yaml与hi-ims-init-service.yaml 正常无需修改

2.2.9.3 Importing the Nacos configuration files

## In the commands below, replace 172.16.36.50 with the Nacos server address (192.168.56.61 in this single-node deployment) and replace the accessToken value with a valid token for your own Nacos instance; the token shown is only the example value from the original capture

## Create the namespace hi-ims-pro

curl 'http://172.16.36.50:8848/nacos/v1/console/namespaces?&accessToken=eyJhbGciOiJIUzI1NiJ9.eyJzdWIiOiJuYWNvcyIsImV4cCI6MTY1OTAwNTI0OH0.b8zxYTkMJGvVdfZ6k24LY7qzl5x72vLYOtGaHKU4tuQ' \
-H 'Content-Type: application/x-www-form-urlencoded' \
--data 'customNamespaceId=hi-ims-pro&namespaceName=hi-ims-pro&namespaceDesc=hi-ims-pro&namespaceId=hi-ims-pro'

## Create the namespace hi-ims-rpc

curl 'http://172.16.36.50:8848/nacos/v1/console/namespaces?&accessToken=eyJhbGciOiJIUzI1NiJ9.eyJzdWIiOiJuYWNvcyIsImV4cCI6MTY1OTAwNTI0OH0.b8zxYTkMJGvVdfZ6k24LY7qzl5x72vLYOtGaHKU4tuQ' \
-H 'Content-Type: application/x-www-form-urlencoded' \
--data 'customNamespaceId=hi-ims-rpc&namespaceName=hi-ims-rpc&namespaceDesc=hi-ims-rpc&namespaceId=hi-ims-rpc'

## Upload the Nacos business configuration files

curl --location --request POST 'http://172.16.36.50:8848/nacos/v1/cs/configs?import=true&namespace=hi-ims-pro' --form 'policy=OVERWRITE' --form 'file=@"/tmp/nacos_config_V2.2.3.zip"'
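
The two namespace requests differ only in the namespace ID, so the form body can be generated instead of typed twice, which avoids copy-and-paste mismatches between the fields. This is a sketch only: `namespace_form`, `NACOS_URL`, and `NACOS_TOKEN` are names invented for this example, and the field list simply mirrors the `--data` string used in the commands above.

```shell
#!/usr/bin/env bash
# Sketch: build the form body for a namespace-creation request.
# namespace_form, NACOS_URL and NACOS_TOKEN are hypothetical names.

# namespace_form ID: the same value is used for the ID, the name and the description.
namespace_form() {
    local ns="$1"
    printf 'customNamespaceId=%s&namespaceName=%s&namespaceDesc=%s&namespaceId=%s' \
        "$ns" "$ns" "$ns" "$ns"
}

# Example (set NACOS_URL and NACOS_TOKEN yourself before running):
#   for ns in hi-ims-pro hi-ims-rpc; do
#       curl "${NACOS_URL}/nacos/v1/console/namespaces?accessToken=${NACOS_TOKEN}" \
#            -H 'Content-Type: application/x-www-form-urlencoded' \
#            --data "$(namespace_form "$ns")"
#   done
```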

2.2.10 Deploying Flink

## Create the application directory

mkdir -p /ZHD/apps/flink/conf

chmod 777 /ZHD/apps/flink

cd /ZHD/apps/flink/conf

# Configuration file for 思莫特 (SMT)

vi smt-conf.yaml

flink.kafka.bootstrap-servers: 172.16.36.50:9092

flink.kafka.group-id: flink_smt

flink.kafka.topics: smt

flink.clickhouse.driver-class-name: ru.yandex.clickhouse.ClickHouseDriver

flink.clickhouse.url: jdbc:clickhouse://172.16.36.50:8123/hi_ims_data

flink.clickhouse.username: default

flink.clickhouse.password: Zhdgps@123

flink.redis.address: 172.16.36.50:7001

flink.redis.password: zhdgps@123

# Configuration file for 浙江博远 (Zhejiang Boyuan)

vi ipcs-conf.yaml

flink.kafka.bootstrap-servers: 172.16.36.50:9092

flink.kafka.group-id: flink_ipcs

flink.kafka.topics: ipcs_eigenvalue,ipcs_vibrate

flink.clickhouse.driver-class-name: ru.yandex.clickhouse.ClickHouseDriver

flink.clickhouse.url: jdbc:clickhouse://172.16.36.50:8123/hi_ims_data

flink.clickhouse.username: default

flink.clickhouse.password: Zhdgps@123

flink.redis.address: 172.16.36.50:7001

flink.redis.password: zhdgps@123
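
The two task configuration files are identical except for the Kafka group ID and topics, and both point at the same host. A small generator keeps them in sync and makes it easy to substitute the real server address. This is a sketch under that assumption; `render_flink_conf` is a hypothetical helper name, and the keys, ports, and passwords are copied from the two files above.

```shell
#!/usr/bin/env bash
# Sketch: generate a Flink task configuration from three parameters.
# render_flink_conf is a hypothetical helper name.

# render_flink_conf HOST GROUP_ID TOPICS: print the configuration to stdout.
render_flink_conf() {
    local host="$1" group="$2" topics="$3"
    cat << EOF
flink.kafka.bootstrap-servers: ${host}:9092
flink.kafka.group-id: ${group}
flink.kafka.topics: ${topics}
flink.clickhouse.driver-class-name: ru.yandex.clickhouse.ClickHouseDriver
flink.clickhouse.url: jdbc:clickhouse://${host}:8123/hi_ims_data
flink.clickhouse.username: default
flink.clickhouse.password: Zhdgps@123
flink.redis.address: ${host}:7001
flink.redis.password: zhdgps@123
EOF
}

# Example:
#   render_flink_conf 192.168.56.61 flink_smt smt > smt-conf.yaml
#   render_flink_conf 192.168.56.61 flink_ipcs ipcs_eigenvalue,ipcs_vibrate > ipcs-conf.yaml
```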

## Install docker-compose

chmod +x docker-compose

cp -rp docker-compose /usr/local/bin/docker-compose

## Compose file for the Flink containers

vi flink-docker-compose.yml

version: "2.2"
services:
  jobmanager:
    image: flink:1.15.4-scala_2.12
    ports:
      - "18081:8081"
    command: jobmanager
    volumes:
      - /ZHD/apps/flink/jobmanager/log:/opt/flink/log
      - /ZHD/apps/flink/conf:/opt/flink/task-conf
      - /ZHD/apps/flink/flink-web-upload:/opt/flink/flink-web-upload
      - /ZHD/apps/flink/checkpoint:/opt/flink/checkpoint
    environment:
      - |
        FLINK_PROPERTIES=
        jobmanager.rpc.address: jobmanager
        web.upload.dir: /opt/flink
        state.backend: rocksdb
        state.checkpoint-storage: filesystem
        state.checkpoints.dir: file:///opt/flink/checkpoint
        state.checkpoints.num-retained: 10
  taskmanager:
    image: flink:1.15.4-scala_2.12
    depends_on:
      - jobmanager
    command: taskmanager
    volumes:
      - /ZHD/apps/flink/taskmanager/log:/opt/flink/log
      - /ZHD/apps/flink/conf:/opt/flink/task-conf
      - /ZHD/apps/flink/checkpoint:/opt/flink/checkpoint
    scale: 3
    environment:
      - |
        FLINK_PROPERTIES=
        jobmanager.rpc.address: jobmanager
        taskmanager.numberOfTaskSlots: 2
        state.backend: rocksdb
        state.checkpoint-storage: filesystem
        state.checkpoints.dir: file:///opt/flink/checkpoint
        state.checkpoints.num-retained: 10

## Create and start the Flink containers

docker-compose -f flink-docker-compose.yml up -d

2.3 部署业务模块

2.3.1 业务配置文件编辑

2.3.1.1 创建业务目录

mkdir -p /ZHD/apps/zhd/bds-lbs-stream

mkdir -p /ZHD/apps/zhd/hi-ims-standard

mkdir -p /ZHD/apps/zhd/hi-ims-web

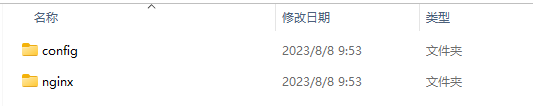

2.3.1.2 上传hi-ims-web配置并修改配置

将config与nginx目录,移动到/ZHD/apps/zhd/hi-ims-web目录下

修改nginx配置文件

## 将下面的URL连接地址,修改为服务器地址192.168.56.61

location /api {

rewrite /api/(.*)$ /$1 break;

proxy_pass http://192.168.56.61:9900;

proxy_read_timeout 12h;

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection "upgrade";

proxy_set_header Host $host;

proxy_set_header X-real-ip $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

}

location /xxl-job-admin {

proxy_pass http://192.168.56.61:9910;

}

location /manager/druid {

proxy_pass http://192.168.56.61:8080;

}

location /ws/admin {

rewrite /ws/(.*)$ /$1 break;

proxy_pass http://192.168.56.61:8080;

proxy_read_timeout 12h;

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection "upgrade";

}

location /ws/iot {

rewrite /ws/(.*)$ /$1 break;

proxy_pass http://192.168.56.61:8080;

proxy_read_timeout 12h;

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection "upgrade";

}

location /hi-ims-standard {

proxy_pass http://192.168.56.61:9906;

}

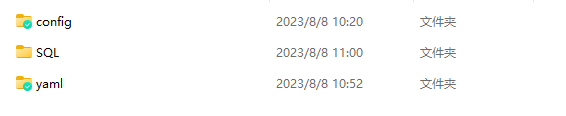

2.3.1.3 上传hi-ims-standard配置文件并修改配置

将config、yaml、SQL目录,移动到/ZHD/apps/zhd/hi-ims-standard目录下

修改config/application.yml

1、修改nacos IP地址与命名空间

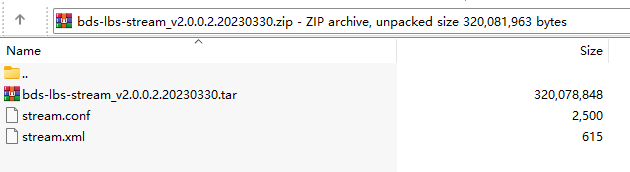

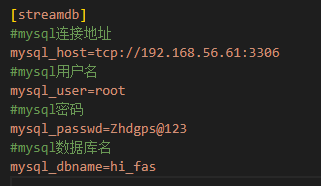

2.3.1.4 上传bds-lbs-stream镜像与配置文件

将bds-lbs-stream_v2.0.0.2.20230330.zip解压后上传到/ZHD/apps/zhd/bds-lbs-stream/config下面

1、加载镜像

docker load -i bds-lbs-stream_v2.0.0.2.20230330

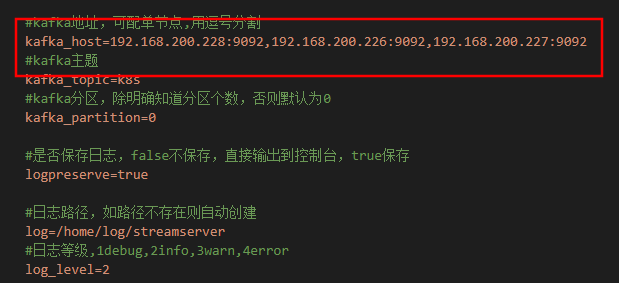

2、修改stream.conf配置文件

修改为192.168.56.61:9092

修改MySQL连接地址和库名,需要提前手动创建hi-fas数据库,无需手动创建数据表,数据表业务进程会自动创建

echo ‘create database hi_fas’ | mysql -uroot -pZhdgps@123

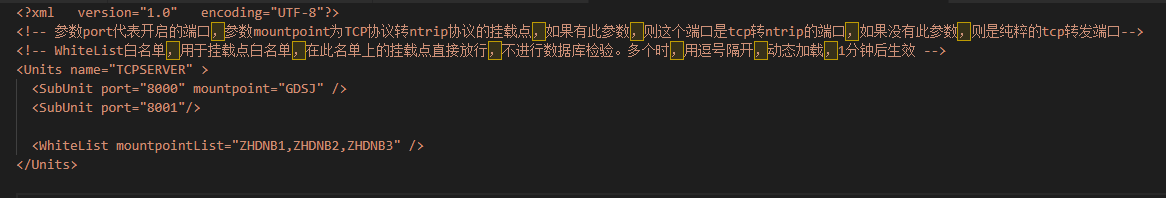

3、stream.xml文件

如果需要GGA数据,配置基站白名单,可后期配置

<WhiteList mountpointList=”该字段为基站设备ID”,”该字段为基站设备ID” /> 可配置多个

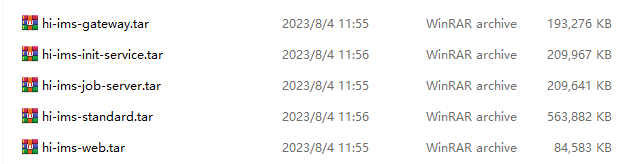

2.3.2 上传并加载业务镜像

# 将业务镜像包通过sftp软件上传到/tmp目录下加载

cd /tmp

docker load -i hi-ims-gateway.tar

docker load -i hi-ims-init-service.tar

docker load -i hi-ims-job-server.tar

docker load -i hi-ims-standard.tar

docker load -i hi-ims-web.tar

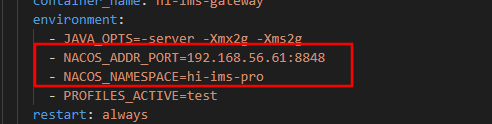

2.3.3 编辑业务启动yml文件

/ZHD/apps/zhd/hi-ims-standard/yaml下的两个yml文件(hi-ims_init_V2.2.3.yml,hi-ims_V2.2.3.yml)都要编辑修改。

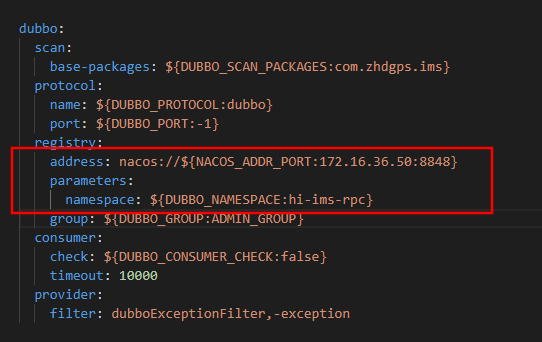

需要修改下面的nacos地址与命名空间

2.3.4 启动init模块

## 创建hi-ims数据库

echo ‘create database hi_ims’ | mysql -uroot -pZhdgps@123

cd /ZHD/apps/zhd/hi-ims-standard/yaml

docker-compose -f hi_ims_init_v2.2.3.yml up -d –remove-orphans

## 跟踪查看,业务进程日志

docker logs hi-ims-init-server –tail=10 -f

2.3.5 Loading the base data

2.3.5.1 Loading data into MySQL

## The target database is hi_ims, the database created in 2.3.4

cd /ZHD/apps/zhd/hi-ims-standard/SQL

mysql -uroot -pZhdgps@123 hi_ims < R__usr_tenant_info.sql

mysql -uroot -pZhdgps@123 hi_ims < R__usr_role.sql

mysql -uroot -pZhdgps@123 hi_ims < R__usr_user_role_merge.sql

mysql -uroot -pZhdgps@123 hi_ims < R__usr_user_dept_merge.sql

mysql -uroot -pZhdgps@123 hi_ims < R__xxl_job_group.sql

mysql -uroot -pZhdgps@123 hi_ims < R__sys_data_permission.sql

mysql -uroot -pZhdgps@123 hi_ims < R__sys_resource.sql

mysql -uroot -pZhdgps@123 hi_ims < R__sys_role_resource.sql

mysql -uroot -pZhdgps@123 hi_ims < R__usr_dept.sql

mysql -uroot -pZhdgps@123 hi_ims < R__usr_info.sql

mysql -uroot -pZhdgps@123 hi_ims < R__usr_permission.sql
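
Running eleven imports by hand makes it easy to miss a failure in the middle of the list. A loop that stops at the first error is one way to tighten this up. The sketch below is an illustration only: `import_sql` is a hypothetical helper, and `SQL_CLIENT` is an override invented here so that the loop can be dry-run without a database; by default it uses the same `mysql` invocation as the commands above.

```shell
#!/usr/bin/env bash
# Sketch: import SQL files in order and stop at the first failure.
# import_sql and SQL_CLIENT are hypothetical names for this example.

# import_sql DB FILE...: feed each file to the client on stdin, in the order given.
import_sql() {
    local db="$1"; shift
    local f
    for f in "$@"; do
        echo "importing ${f}"
        # SQL_CLIENT is deliberately unquoted so it splits into command + options.
        ${SQL_CLIENT:-mysql -uroot -pZhdgps@123} "$db" < "$f" || {
            echo "failed: ${f}" >&2
            return 1
        }
    done
}

# Example (list all files in the order shown above):
#   cd /ZHD/apps/zhd/hi-ims-standard/SQL
#   import_sql hi_ims R__usr_tenant_info.sql R__usr_role.sql R__usr_user_role_merge.sql
```

Passing the files explicitly, rather than using a wildcard, preserves the import order given in this section.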

2.3.5.2 Loading data into ClickHouse

## If ClickHouse is deployed with Docker, the clickhouse-client package must also be installed on the host

sudo yum install -y yum-utils

sudo yum-config-manager --add-repo https://packages.clickhouse.com/rpm/clickhouse.repo

sudo yum install -y clickhouse-client-22.2.3.5

## Create the hi_ims_data database

echo 'CREATE DATABASE hi_ims_data ENGINE = Atomic' | clickhouse-client -u default --password Zhdgps@123

cd /ZHD/apps/zhd/hi-ims-standard/SQL

clickhouse-client -u default --password Zhdgps@123 -n < CK库创建.sql

2.3.6 Starting the other business modules

cd /ZHD/apps/zhd/hi-ims-standard/yaml

docker-compose -f hi_ims_v2.2.3.yml up -d --remove-orphans

docker ps | grep hi-ims

## Follow the business process log

docker logs hi-ims-standard-static-gms --tail=10 -f